Last week a mate who's still finding their feet with English asked me what I meant by something I'd said. Can't even remember the sentence now. Something throwaway. But watching them try to parse it, then watching myself try to explain it, was the thing that stuck with me.

Because the words weren't the problem. The words were fine. What they were missing was everything around the words. Who I was talking about. What we'd been chatting about earlier. The tone. The little half-joke buried in there that only really lands if you've lived in Australia for a bit. I had to unpack about ten minutes of context just to make one sentence make sense.

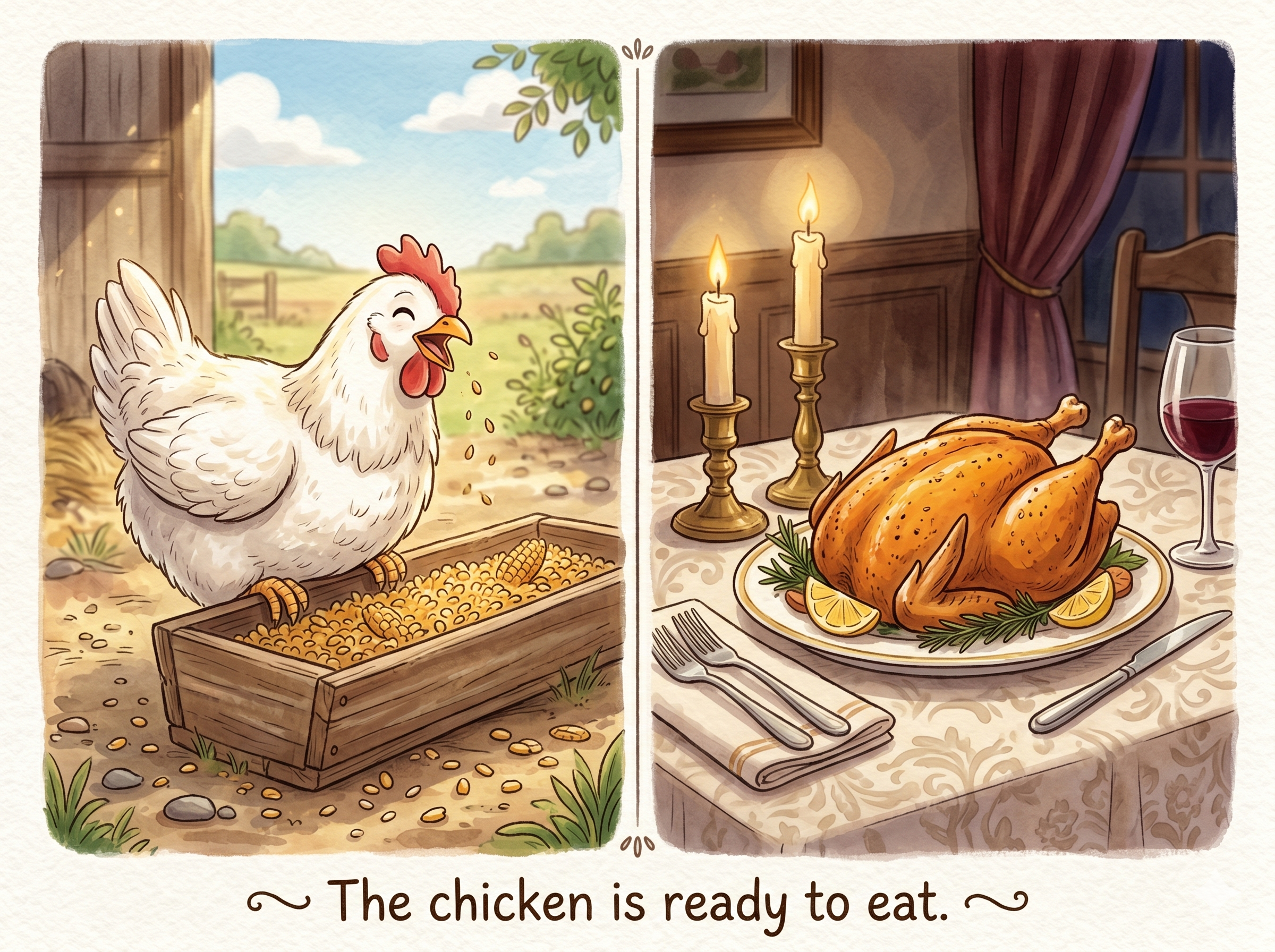

English does this constantly and we never notice. "We need to talk" from your boss isn't the same sentence as "we need to talk" from your mate at the pub. "I'm fine" can mean fine, or the exact opposite, sold entirely on the sigh. None of that is in the words. All of it is in the context.

Which brings me to AI coding tools.

You hand the model a sentence. Sometimes one line. It has to figure out what you actually meant, not what you literally typed. Except it doesn't know which repo you've been swearing at. Doesn't know "the auth thing" is the OAuth flow you rebuilt last Tuesday. Doesn't know your team banned that library, this repo uses tabs, that function is held together by a 2019 comment and hope.

So it guesses. And when it guesses wrong you get a beautifully formatted answer to a problem you never had.

Most of the noise right now is about the model. Bigger, smarter, faster. Fine. But that's not the broken bit for most people. The broken bit is the context. The harness around the model. What it sees, what it has to infer, how much of your actual situation makes it across the gap before it starts typing.

That's what this is about. The room, not the words in it.

Where I started

I've always been in on AI coding, mostly from a curiosity perspective. The early attempts were weak. The model didn't understand best practices, solutions were overdone, first principles were out the window, context windows were tiny. Anything more than a small app or a tidy little refactor was fraught.

I remember a 1:1 with a junior developer back then. She was a bit weird about how long she'd spent on a problem when the solution turned out simpler than she'd thought. We've all been there. There's a lot to be learned from that style of slog. Spending real time on something, braving through, finding the answer, and getting the win at the end. That challenge-carrot-payoff loop is one of the reasons this career still works for me.

It didn't take long for AI tools to start eating into that. I'd recently moved into an Engineering Manager role and was on the tools less anyway. I was using Cursor to build small things on the side. One of them, shakashuffle, was a Quokka-themed planning poker app (the team estimation game where everyone reveals a card at once so the loudest voice doesn't anchor the room). I wanted my own team to use it because every other planning poker tool out there was clinical, joyless, and somehow expensive.

Just getting the landing page to a level I was happy with using Claude 3.5 was a slog. I spent more time steering the model away from regressions and bad patterns than I did making forward progress. Claude 3.7 with autonomous agents was a step up. Bigger context, better awareness of the codebase. Still not enough. I was writing rules in repo docs, scattering comments around, padding prompts with everything I thought it might need, and it would still trip over itself.

I'd played around with a few planning tools. When Amazon Kiro turned up with its style of spec-driven development, I was in. I'd already been deep in refining the flow from idea to implementation. The product vision, the UX research, the iterative narrowing, the task breakdown, the API schemas, the estimations. All the stuff a real team does before code gets written. Spec-driven development felt like a natural extension of that, just for one developer and an agent.

If you're a pure vibe coder, I'll admit, this might look like a lot of work. But if you want to stop the agent hallucinating details you didn't mention, or quietly refactoring three files it wasn't asked to touch, stick with me. This is the harness I use to stay in control of Claude, Kiro, Cursor, or whatever else, all of which (let's be honest) are gently working to abstract this stuff away from you.

I'm no expert. This is just my setup. If you want to get really nerdy, look up the paper "Interpretable Context Methodology: Folder Structure as Agentic Architecture" by Jake Van Clief and David McDermott. You can also check out Eduba, the consultancy where Van Clief and McDermott work.

My setup

For reference. Use whatever you want, this is just mine.

- IDE: WebStorm by JetBrains

- IDE plugin: Claude Code plugin

- LLM/agent: Claude Code

- Repo structure: Turborepo monorepo. Most apps are Next.js + Supabase, with a shared component library, DaisyUI, and the usual bits. The template is open sourced at monorepo-template.

I've written four skills to keep my development flow honest. All of them write their artefacts to a .plans/ directory at the root of the monorepo. I use a monorepo so I can save on cloud costs across all my half-finished ideas and share components and patterns through everything. Building gets faster the more I build.

How I actually use it

Same example end to end, because that's the only way the value clicks.

Say I have an idea. A web app that lists campervan-friendly camping locations with their amenities, but social, so people on the road can leave reviews and follow each other. I open Claude Code and prompt:

I have an idea for a web app that finds all the campervan-driveable camping locations and lists out their amenities. I want it to feel social. Create me a PRD for this using the skill.

Here's what happens.

product-requirements

---

name: prd-plan

description: Iteratively plan a Product Requirements Document (PRD) with the user via in-file checkbox Q&A, folding answers into the spec until signed off. Use when the user asks to "plan PRD-XXX", "create a PRD for X", "work on PRD-003", "iterate on PRD-XXX", or to turn a rough product idea into a fully specified PRD. PRDs are numbered sequentially (PRD-001, PRD-002, …) just like FDs.

metadata:

argument-hint: <PRD-XXX id, product name, or plan file path>

---

# PRD Plan Iteration

This skill runs a tight, in-file question-and-answer loop that builds and refines a Product Requirements Document (PRD) until the user has signed it off. No code is written while this skill is running. The goal is a spec where every product decision is explicit, every open question is closed, and every user correction has been folded back into the relevant section(s) of the document, not just recorded as an answer.

A PRD is a complete product spec: who uses it, what it does, what the data model looks like, how the UX works, and how the build is phased. It is written once, up front, and is the authoritative reference for the entire product build.

## When to use

Invoke this skill when:

- The user says "plan PRD-XXX", "create a PRD for X", "let's work on PRD-XXX", "iterate on PRD-XXX", "flesh out PRD-XXX", or similar.

- The user has a rough product idea or an existing `.plans/product-requirements-document/` file they want to fully specify.

- The user says "I answered the open questions, review and update", that's the middle of this loop; resume it.

Do NOT invoke this skill when:

- The user is asking a quick question about an existing PRD. Just read it and answer.

- The user has asked for code changes directly. This skill never writes code.

## PRD document structure

Every PRD lives under `.plans/product-requirements-document/PRD-XXX - {slug}.md`, where `XXX` is a zero-padded sequential number (PRD-001, PRD-002, …). The numbering mirrors FDs: check the existing files in `.plans/product-requirements-document/` and pick the next number. A template lives at `.plans/product-requirements-document/001-TEMPLATE.md`, copy it as the starting point for a new PRD. A complete PRD has these sections (in order):

```

# PRD-XXX: {Product Name}

## Status

- [x] Open

- [ ] In-progress

- [ ] Partly implemented

- [ ] Done

## Overview

One paragraph: what the product is, who it's for, and the core problem it solves.

## Tech Stack

Framework, database, auth, any third-party services. Match existing monorepo patterns unless there's a reason not to.

## Users

Who uses this? What role(s) do they have? Is there public access? Authentication approach?

## Features

One H3 per major feature area. Each feature area lists what users can do (CRUD verbs, filters, actions). No implementation detail, just capabilities.

## Data Model

One table per entity showing Field, Type, Details. Include enums with their allowed values. Note FK relationships.

## UX Design

### Design Principles

2–4 one-liners that define the feel and priorities of the UI.

### Layout

ASCII wireframe(s) for the primary view(s). One diagram per major screen or state.

### Key Interactions

Named subsections for the most complex or non-obvious interactions (e.g. slide-out panel, inline confirmation, email generator drawer).

## Implementation Plan

Numbered phases, each with a short title and a bullet list of what gets built. Phases should be independently deployable or at least independently testable.

## Open Questions

Active Q&A area (see format below). Moves to Decision Trail once all answered.

## Decision Trail

Table of resolved decisions (columns: ✅, Question, Decision, Why).

```

## Locating or creating the plan file

1. If the user passed a file path, read it.

2. If they passed an ID (e.g. `PRD-003`, `003`) or slug (e.g. `product-database`), glob for it under `.plans/product-requirements-document/` and pick the matching file. Ignore `*TEMPLATE*` files.

3. If no file exists yet:

   - Look at existing `PRD-XXX - *.md` files in `.plans/product-requirements-document/` and pick the next sequential number (zero-padded to three digits).

   - Read `.plans/product-requirements-document/001-TEMPLATE.md` and use it as the skeleton.

   - Create the new file at `.plans/product-requirements-document/PRD-XXX - {slug}.md`, filling in what you know from the user's description, and leaving sections as `_TBD_` placeholders where you need answers.

4. Confirm the file path and the assigned PRD number in your first reply so the user can correct you before you start editing.

## The iteration loop

Each cycle has four beats:

### Beat 1: Read current state

Read the full PRD file. Identify:

- Which sections are still vague, incomplete, or contradicting each other

- What's answered vs open in the Q&A section

- Any checkboxes the user has ticked or notes they've added since the last read

- Any new requirements the user added directly into prose sections

### Beat 2: Process user answers

If the user has answered questions since last time:

- Fold each answer into EVERY section of the PRD it affects, not just the questions area. If an answer changes the data model, rewrite the Data Model section. If it changes the layout, update the wireframe. If it changes what phases look like, update the Implementation Plan.

- A decision is "processed" only when the PRD reads consistently as if the decision was always there. Leaving a resolved answer orphaned in the Open Questions area while the main spec still says something contradictory is a failure mode, do not do it.

- Watch for answers that contradict things written earlier. Reconcile explicitly; never silently keep the old wording.

- Watch for answers that reveal a project-wide preference (e.g. a locale/spelling rule, a tooling convention). These belong in memory via the auto-memory system, not just in this one PRD.

### Beat 3: Raise new questions

Surface new questions **in the PRD file**, NOT just in chat. The user works through these in their IDE and the PRD is the durable artefact.

**In-file question format:**

For a question with discrete options, use checkboxes the user can tick directly in the file:

```markdown

#### QX. {short question title}

{One or two sentences explaining the question and why it matters for the product.}

- [ ] Option A: {short description of what this option means and when it's right}

- [ ] Option B: {short description}

- [ ] Option C: {short description}

- [ ] Other (notes):

- _{your note here}_

**Notes / reasoning:**

- _{anything you want me to know about the pick}_

```

Rules for writing questions:

- **Every question gets a "Notes" slot.** The user can always fill it with an alternative or a reason.

- **Give your current recommendation inline.** If you think Option B is right, say so in Option B's description and explain the tradeoff in one line.

- **Never ask the user to pick between options you haven't described.** Vague questions like "how should we handle X?" are a failure mode, always offer concrete options.

- **Don't ask questions whose answers are already derivable from the codebase.** Read existing apps first. Ask only for judgement calls (scope, UX direction, priorities, business rules), not facts you can grep for.

- **Questions have IDs** (Q1, Q1a, Q1b, Q2, ...) so conversation can reference them precisely.

- **Cap one round at ~3 questions.** More than that is a survey, not iteration. Pick the ones that unblock the most other decisions first.

- **PRD questions focus on product decisions**, not implementation detail. Good PRD questions: "Should this be single-user or multi-role?", "Is archive soft-delete or hard-delete?", "Should filters persist across sessions?". Bad PRD questions: "Which framework primitive should we use for this?", "Should this run on the server or the client?"

### Beat 4: Report back and wait

Send the user a terse message (≤120 words) summarising:

- What you changed in the PRD based on their answers

- What new questions you raised (by ID) and where to find them in the file

- One unilateral decision you made if you had to make one, flag it so they can override

Then stop. The user will either answer the new questions (another cycle begins) or sign off.

## Managing the decision trail

- Under `## Open Questions`, only keep questions that are actually still open (unchecked or partially answered).

- Move resolved questions to `## Decision Trail` as a table: columns `✅ | Question | Decision | Why`. Keep each Why cell to one sentence.

- Order the table chronologically, the order carries information about how the design evolved.

- When a question is resolved, fold the answer into the main PRD sections FIRST, then move the question to the decision table. Never skip the fold-in step.

## Sign-off gate

You are finished ONLY when:

1. Every question in the file is answered (no unticked checkboxes, no `{your note here}` placeholders the user was expected to fill).

2. All PRD sections are complete and self-consistent, Overview, Tech Stack, Users, Features, Data Model, UX Design, and Implementation Plan all say consistent things. A developer could read this PRD cold and know what they're building.

3. The user has explicitly confirmed they want to proceed. Common sign-off phrases: "looks good", "go", "approved", "ship it", "done". If the user's latest message doesn't clearly sign off, ask: "Is this PRD ready, or do you want another pass?", one sentence, then stop.

Until all three are true, stay in the loop. Do not write any code. Do not read implementation files unless needed to answer a planning question.

## Hard rules

- **No code edits during this skill.** Only edits to the PRD file and (where justified) the user's memory system.

- **Always fold answers into the main PRD sections.** Orphaned answers in the Open Questions area while the rest of the PRD is stale is the most common failure mode, guard against it.

- **Never hide questions from the user by asking them only in chat.** If it's a product decision, it goes in the file with a notes slot. Chat is for terse status updates between cycles.

- **Match the tech stack and patterns of the existing project** unless the user explicitly says otherwise. Read `CLAUDE.md` (or equivalent project conventions doc) before raising a tech-stack question.

- **Wireframes are mandatory.** Every PRD must have at least one ASCII wireframe for the primary view before sign-off. If none exists after the first cycle, add a skeleton one and ask the user to correct it.

- **Implementation Plan must have phases.** Each phase should be independently testable. If the user hasn't given phase breakdown, propose one based on the feature list, it's easier to react than to generate from scratch.

- **Respect project-wide preferences** stored in memory. Default to those preferences; only raise a question if there's genuine tension with the product's needs.

- **Keep update messages terse.** The PRD file is the durable artefact. Chat messages between cycles should be ≤120 words and never repeat content that's already in the file.

- **Ask before guessing.** If an answer is ambiguous, raise it as a new question in the next cycle rather than picking unilaterally. If you must pick unilaterally because the decision is tiny and blocks progress, flag it explicitly in the chat summary so the user can override.

## Example cycle

Initial state: user says "plan a PRD for {some new product}". No file exists yet.

Cycle 1:

- Create `.plans/product-requirements-document/PRD-XXX - {slug}.md` with skeleton sections filled from the user's description.

- Identify three big unknowns: who the users are, the CRUD scope, and the boundary of one fuzzy feature area.

- Add Q1 (user roles), Q2 (CRUD scope), Q3 (the fuzzy feature's boundary).

- Send chat: "Created PRD skeleton at `.plans/product-requirements-document/PRD-XXX - {slug}.md`. Three questions at the bottom, Q1 is the main unlock, it shapes the auth model and most of the UX."

- Stop.

Cycle 2 (user ticks answers and adds notes):

- Read the file.

- Fold answers into Overview, Users, Features, and UX sections. Update the wireframe to reflect the layout the user described.

- Raise Q4 (a follow-on decision unblocked by the previous answers).

- Move Q1–Q3 to Decision Trail.

- Send chat: "Folded your answers into sections 3–5, updated wireframe. One new question: Q4, take a look."

- Stop.

Cycle N (user says "looks good"):

- Verify sign-off gate.

- Confirm in chat: "PRD signed off. Ready to start building when you are."

- Exit the skill.

The PRD skill checks .plans/product-requirements-document/ to see what's already in there, picks the next number, reads the template, and creates a new file at .plans/product-requirements-document/PRD-001 - campervan-social.md. The skeleton lands with everything it can fill from my one-paragraph prompt. An Overview. A guess at the Tech Stack based on the repo conventions. A stub for Users. A Features list with the obvious bits. Data Model, UX Design, and Implementation Plan as placeholders.
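The skill is a prompt, not code, so the agent works the numbering out for itself. If you wanted the same rule as a deterministic helper, a minimal sketch might look like this. The function names `nextPrdId` and `prdFileName` are hypothetical, and it assumes file names follow the `PRD-XXX - {slug}.md` pattern from the skill.

```typescript
// Hypothetical helper, not part of the skill: given the file names already in
// .plans/product-requirements-document/, work out the next PRD id.
function nextPrdId(fileNames: string[]): string {
  const numbers = fileNames
    // The skill says to ignore template files.
    .filter((name) => name.indexOf("TEMPLATE") === -1)
    // Assumed naming pattern: "PRD-XXX - {slug}.md" with a three-digit number.
    .map((name) => /^PRD-(\d{3}) - .+\.md$/.exec(name))
    .filter((match): match is RegExpExecArray => match !== null)
    .map((match) => Number(match[1]));

  // No PRDs yet means we start at 001.
  const highest = numbers.length > 0 ? Math.max(...numbers) : 0;
  // Zero-pad to three digits.
  return "PRD-" + ("000" + String(highest + 1)).slice(-3);
}

// Builds the file name for a new PRD from its id and slug.
function prdFileName(id: string, slug: string): string {
  return `${id} - ${slug}.md`;
}
```

With only the template in the directory, `nextPrdId` returns `PRD-001`, which is how the campervan idea ends up as `PRD-001 - campervan-social.md`.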

What it doesn't do is fire twenty questions at me in chat. It adds three checkbox questions at the bottom of the PRD file. Something like:

#### Q1. Account model

Should reviews and posts be tied to accounts, or can people contribute anonymously?

- [ ] Accounts only (recommended, gives you moderation, abuse handling, trust signals)

- [ ] Anonymous browsing, accounts to post

- [ ] Fully anonymous with a captcha gate

- [ ] Other, notes:

- _your note here_

**Notes / reasoning:**

- _anything you want me to know about the pick_

I tick a box, write a note if I want, save the file. The skill reads the file again, folds my answer into the Users section, the Features section, and the Implementation Plan. Then it raises whatever follow-on questions my answer unlocked. Q1 about accounts might open Q4 about which auth provider, Q5 about whether usernames are unique. The loop runs until every question is answered, the spec is internally consistent, and I sign off.
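To make the "every question is answered" check concrete, here is a rough sketch of how you could scan a PRD for questions nobody has ticked yet. The agent does this by reading the file, not by running code. The names `readQuestions` and `openQuestionIds` are hypothetical, and the sketch assumes questions use the `#### Q1. Title` heading and `- [x]` checkbox format shown above.

```typescript
// Hypothetical sketch: find which in-file questions still have no ticked option.
interface QuestionStatus {
  id: string;       // e.g. "Q1" or "Q2a"
  title: string;    // the short question title from the heading
  ticked: string[]; // text of every option the user has ticked
}

function readQuestions(markdown: string): QuestionStatus[] {
  const questions: QuestionStatus[] = [];
  let current: QuestionStatus | null = null;

  for (const rawLine of markdown.split("\n")) {
    const line = rawLine.trim();

    // Assumed heading format: "#### Q1. Account model"
    const heading = /^#### (Q\d+[a-z]?)\. (.+)$/.exec(line);
    if (heading) {
      const [, id = "", title = ""] = heading;
      current = { id, title, ticked: [] };
      questions.push(current);
      continue;
    }

    // A ticked checkbox belongs to the most recent question heading.
    const tickedBox = /^- \[[xX]\] (.+)$/.exec(line);
    if (tickedBox && current) {
      current.ticked.push(tickedBox[1] ?? "");
    }
  }
  return questions;
}

// Questions with no ticked option are still open.
function openQuestionIds(markdown: string): string[] {
  return readQuestions(markdown)
    .filter((question) => question.ticked.length === 0)
    .map((question) => question.id);
}
```

An empty result from `openQuestionIds` covers only the first condition of the sign-off gate. Consistency between sections and my explicit sign-off still need a human and the agent reading the file.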

That's skill one. It saves me from the most expensive failure mode in AI coding: building something nobody asked for, beautifully.

feature-development

---

name: fd-plan

description: Iteratively plan a feature/fix design (FD) document with the user via in-file checkbox Q&A, folding answers into the spec until signed off. Use when the user asks you to "plan FD-XXX", "work on FD-XXX", "iterate on FD-XXX", or to turn a rough plan document into a fully specified one before any code is written.

metadata:

argument-hint: <FD-id or plan file path>

---

# FD Plan Iteration

This skill runs a tight, in-file question-and-answer loop that refines a plan document (an "FD", feature design / fix design) until the user has signed it off. No code is written while this skill is running. The goal is to reach a spec where every decision is explicit, every open question is closed, and every user correction has been folded back into the relevant section(s) of the document, not just recorded as an answer.

## When to use

Invoke this skill when:

- The user says "plan FD-XXX", "let's work on FD-XXX", "iterate on FD-XXX", "flesh out FD-XXX", "review my answers in FD-XXX", or similar.

- A plan document exists under `.plans/` (typically `.plans/feature-development/FD-XXX - ....md` but also `.plans/product-requirements-document/...`, `.plans/*.md`) and the user wants to refine it before implementing.

- The user says "I answered the open questions, review and update", that's the middle of this loop, resume it.

Do NOT invoke this skill when:

- The user has asked for code changes directly. This skill never writes code.

- The user is asking a quick question about an existing FD. Just read it and answer.

## Locating the plan file

1. If the user passed an argument that looks like a file path, read that path.

2. If they passed an ID (e.g. `FD-005`, `PRD-foo`, `005`), glob for it under `.plans/**/` and pick the matching file. Ignore `*TEMPLATE*` files.

3. If nothing matches or there are multiple candidates, ask the user which file they meant. Do not guess.

4. Always confirm the file path in your first reply so the user can correct you before you start editing.

## The iteration loop

Each cycle of the loop has four beats:

### Beat 1: Read current state

Read the full plan file. Identify:

- Which sections of the spec are still vague (hand-wavy, TODO-ish, or contradicting each other)

- The current "Open questions" / "Decisions" / similar section, what's answered, what isn't

- Any checkboxes the user has ticked or notes they've added since the last read

- Any new requirements the user added directly into prose sections

### Beat 2: Process user answers

If the user has answered questions since last time:

- Fold each answer into EVERY section of the spec it affects, not just the questions area. If an answer changes the label rules, rewrite the Label Rules section. If it changes data fetching, rewrite the Data section. If it changes click behaviour, rewrite the Click Handler section.

- A decision is "processed" only when the spec reads consistently as if the decision was always there. Leaving a resolved answer orphaned in the Open Questions area while the main spec still says something contradictory is a failure mode, do not do it.

- Watch for answers that contradict things you wrote earlier in this skill's run. Reconcile explicitly; never silently keep the old wording.

- Watch for answers that reveal a project-wide preference (e.g. a coding-style rule, a tooling convention, a copy/locale rule). These belong in memory via the auto-memory system, not just in this one FD.

### Beat 3: Raise new questions

Answers almost always raise new questions. Surface them **in the plan file** using the in-file format below, NOT just in chat. The user works through these in their IDE and the plan is the durable artefact.

**In-file question format:**

For a question with discrete options, use checkboxes the user can tick directly in the file:

```markdown

#### QX. {short question title}

{One or two sentences explaining the question and why it matters.}

- [ ] Option A: {short description of what this option means and when it's right}

- [ ] Option B: {short description}

- [ ] Option C: {short description}

- [ ] Other (notes):

- _{your note here}_

**Notes / reasoning:**

- _{anything you want me to know about the pick}_

```

Rules for writing questions:

- **Every question gets a "Notes" slot.** The user can always fill it with an alternative or a reason.

- **Give your current recommendation inline.** If you think Option B is right, say so in Option B's description and explain the tradeoff in one line. The user is busy; don't make them derive your opinion from scratch.

- **Never ask the user to pick between options you haven't described.** Vague questions like "how should we handle X?" are a failure mode, always offer concrete options.

- **Don't ask questions whose answers are already derivable from the codebase.** Read the code first. Ask the user only for judgement calls (UX choices, priorities, scope), not facts you could grep for.

- **Questions have IDs** (Q1, Q1a, Q1b, Q2, ...) so subsequent conversation can reference them precisely.

- **Cap one round at ~3 questions.** More than that and you're not iterating, you're running a survey. If you have more, pick the ones that unblock the most other decisions first.

### Beat 4: Report back and wait

Send the user a terse message (≤120 words) summarising:

- What you changed in the spec based on their answers

- What new questions you raised (by ID) and where to find them in the file

- One unilateral decision you made if you had to make one, flag it so they can override

Then stop. The user will either answer the new questions (another cycle begins) or sign off.

## Managing the decision trail

Over multiple cycles the plan accumulates answered questions. Keep them visible but compact so the document doesn't bloat:

- Under `## Open questions`, only keep questions that are actually still open (unchecked or partially answered). Everything resolved moves to a "Decisions" or "Decision trail" section.

- Use a **table format** for resolved decisions, the user has expressed this preference (columns: ✅, Question, Decision, Why). Keep each Why cell to one sentence so the table scans fast.

- Order the table by when each question was raised, not alphabetically. The order itself carries information about how the design evolved.

- When a question is resolved, fold the answer into the main spec FIRST, then move the question to the decisions table. Never skip the fold-in step.

## Risks section, always a table, always linked to tests

Once the spec is fleshed out enough that you're naming specific files, libraries, and framework primitives, you are ALSO responsible for surfacing risks and new issues the chosen approach would introduce. These are not questions, they are footguns you discovered during investigation that the user should see before implementation starts.

Surface them as a `## Risks & new issues surfaced by this investigation` section with a **table**, not a list of prose paragraphs. Columns:

| ID | Risk | Mitigation | Verification |
|----|------|------------|--------------|

Rules:

- **IDs are `R1`, `R2`, …** and are referenced elsewhere in the doc (e.g. from Files to Modify, from Decisions, from the Verification section).

- **Each row has at least one test ID** in the Verification column (`V1`, `V2`, …). If a risk genuinely cannot be tested (e.g. "pre-existing limitation, documented baseline"), write `V_ (informational)` and add a corresponding entry in the Verification section that records the known baseline.

- **Verification section mirrors the table**: every `Vn` referenced in the risks column must exist as a subsection under `## Verification`, with the list of checks for that test. The Verification section header should say "Every risk Rn below has at least one test. Test IDs are tagged with the risks they cover."

- **Mitigations are concrete actions**, not "be careful", point at a specific code comment, a Playwright test, a config flag, or a resolved question (e.g. "Resolved by Qb option 2").

- **Order by severity then discovery order.** Highest-blast-radius risks first (compile-time blockers, data loss, security). Informational / accepted-tradeoff risks last.

- **A risk that's resolved by an answered question cites that question** in the Mitigation cell ("Resolved by Qb (option 2)") so the audit trail is readable.

- **Don't repeat content** between the risks table and the Verification tests, the table is the index, the Verification section has the actual steps.

When to add risks vs when to raise questions: if the risk requires a user decision, raise it as a numbered question (Qa, Qb, …). If the risk is a gotcha with a clear mitigation, it goes in the table. A question can graduate to a risk once the user answers it, keep the risk row (referencing the answered question) so the audit trail stays intact.

Watch for risks in these categories:

- **Config/flag prerequisites** (framework feature requires opt-in).

- **Silent coexistence issues** (new system + old system; what's the invalidation boundary?).

- **UX regressions from the refactor itself** (removing a lift breaks a live hint).

- **Cache-staleness windows** (SWR bought you speed, but users might see stale state for N seconds).

- **Cross-boundary cancellation/error propagation** (server actions, transitions, error boundaries).

- **State preservation across rollbacks** (optimistic updates, transitions, navigation).

- **Performance cliffs under cold cache / rate limits**.

- **Backward compatibility with saved user state** (password managers, sessionStorage, URL params).

## Sign-off gate

You are finished ONLY when:

1. Every question in the file is answered (no unticked checkboxes in open questions, no `{your note here}` placeholders the user was expected to fill).

2. The spec sections (Problem, Data, Label rules, Click handler, Files to modify, Verification, etc.) are self-consistent, you could hand the document to someone cold and they could implement it.

3. The user has explicitly confirmed they want to proceed. Common sign-off phrases: "looks good, start coding", "go", "implement it", "ship it", "approved". If the user's latest message doesn't clearly sign off, ask: "Is this ready to implement, or do you want another pass?", one sentence, then stop.

Until all three are true, stay in the loop. Do not write any code. Do not start edits to the implementation files. Do not even read the implementation files unless you need them to answer a planning question.

## Hard rules

- **No code edits during this skill.** Only edits to the plan file and (where justified) to the user's memory system.

- **Always fold answers into the main spec.** Orphaned answers in an "Open questions" area while the rest of the spec is stale is the most common failure mode of this skill, guard against it.

- **Never hide questions from the user by asking them only in chat.** If it's a decision that shapes the spec, it goes in the file with a notes slot. Chat is for terse status updates between cycles.

- **Respect project-wide preferences** stored in memory. If a question touches one of them, default to the memory's answer and only raise the question if there's a real tension.

- **Watch for project-wide feedback** while iterating. If the user tells you something that clearly applies beyond this FD ("I prefer X over Y everywhere"), save it to auto-memory in the same cycle you're folding it into the spec, don't wait for a separate invitation.

- **Keep update messages terse.** The plan file is the durable artefact. Chat messages between cycles should be ≤120 words and never repeat content that's already in the file.

- **Ask before guessing.** If an answer is ambiguous, raise it as a new question in the next cycle rather than picking unilaterally. If you must pick unilaterally because the decision is tiny and blocks progress, flag it explicitly in the chat summary so the user can override.

- **Risks are a table, not prose, and every row cites a test.** See "Risks section" above. Failing to tie each risk to a `Vn` test ID in the Verification section is a failure mode, the whole point of documenting the risk is to ensure it gets tested.

## Example cycle

Initial state: `.plans/feature-development/FD-XXX - {app} - {short slug}.md` exists with a rough problem statement, no open questions, no data section.

Cycle 1:

- Read the file.

- Spec is sparse; a key sub-system (e.g. where state is persisted) isn't specified.

- Add an "Open questions" section with Q1 (the primary unlock, pick from a small set of concrete options), Q2 (a scope question that depends on Q1), Q3 (a follow-on edge case). Each has checkbox options + notes slots + your recommendation inline.

- Send a chat message: "Drafted 3 questions at the bottom of FD-XXX. Q1 is the main unlock, tick one and I'll build the rest around your choice."

- Stop.

Cycle 2 (user ticks Q1's recommended option and adds a note on Q2):

- Read the file.

- Fold the chosen option into the relevant spec section, update "Files to Modify" to mention the affected modules, update Verification to include the new checks.

- Process Q2's note as a partial answer; raise Q2a as a follow-up sub-question.

- Move Q1 to a new "Decisions" table.

- Send a chat message: "Folded the Q1 decision into sections 3 and 5. Raised Q2a as a follow-up, take a look."

- Stop.

Cycle N (user says "looks good, ship it"):

- Verify sign-off gate conditions.

- Confirm in chat: "Signed off. Switching out of planning mode, want me to start the implementation now?"

- Exit the skill. Implementation is a separate task.

Signed-off PRD in hand, I'm not going to give the agent the whole document and say "build it." That's too much rope. I pick one feature. "Find a campsite within 30 minutes of my current location, filter by amenities."

Then:

Plan an fd location search feature based in campervan-social

Same loop, different output. The skill creates .plans/feature-development/FD-001 - campervan - location-search.md, pulls the relevant constraints from the PRD, identifies the modules it'll touch (a server action, a client filter component, a Supabase query), and writes a Files to Modify section.

The bit I really like is the Risks table. Once it's investigated the codebase, it surfaces footguns it found while looking around. Stale-cache windows. A coexistence issue between an old hook and a new one. A spelling drift between en_AU and en_US in existing copy. Each risk row is tied to a verification step in a tests section, so nothing gets flagged without a plan to actually catch it. By the time I sign the FD off, I have a document I could hand to a contractor on the other side of the world and they'd build the same thing.
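The "every risk is tied to a verification step" rule is easy to check mechanically once the two tables are parsed. Here's a minimal sketch of that check, not what the skill actually runs. The `RiskRow` shape, the `findUncoveredRisks` name and the sample rows are all made up for illustration; the only thing borrowed from the skill is the `Vn` style of test ID.

```typescript
// Hypothetical shape: a real check would first parse the Risks table out of the FD markdown.
interface RiskRow {
  risk: string;
  testIds: string[]; // IDs like "V2" cited in the risk row
}

// Returns risks that cite no test at all, or cite an ID missing from the Verification section.
function findUncoveredRisks(risks: RiskRow[], verificationIds: string[]): RiskRow[] {
  const known = new Set(verificationIds);
  return risks.filter(
    (row) => row.testIds.length === 0 || row.testIds.some((id) => !known.has(id)),
  );
}

// Illustrative rows only, loosely based on the footguns mentioned above.
const sampleRisks: RiskRow[] = [
  { risk: "Stale-cache window after a filter change", testIds: ["V2"] },
  { risk: "Old hook and new hook coexisting", testIds: ["V4"] },
  { risk: "en_AU vs en_US spelling drift in copy", testIds: [] },
];

const uncovered = findUncoveredRisks(sampleRisks, ["V1", "V2", "V3"]);
console.log(uncovered.map((row) => row.risk));
```

Anything this returns is a risk that got flagged without a plan to catch it, which is the exact failure the skill's hard rule is guarding against.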

code-review

---

name: principal-code-review

description: Review code changes with the judgement of a principal engineer who knows this monorepo intimately. Use when the user asks for a code review, says "what do you think of this", asks for feedback on a PR/diff/branch/recent commits, says "is this any good", "before I merge", "sanity check this", or pastes a diff and asks to review uncommitted changes.

---

# Principal Code Review

Review the pending or specified changes with the voice of an opinionated principal engineer who built and ships this codebase. Output is a markdown file in `.plans/code-review/` plus an inline verdict summary.

## When to trigger

Activate when the user asks for a code review, asks "what do you think of this", asks for feedback on a PR, diff, branch, or recent commits, or says things like "is this any good", "before I merge", "sanity check this". Also trigger when the user pastes a diff or asks to review uncommitted changes (`git diff`, `git diff --staged`, `git diff main...HEAD`).

## Reviewer mindset

Adopt the voice of an opinionated principal who has built and shipped this codebase. Direct, specific, no hedging. Comments should sound like a senior reviewing a colleague's PR, not a checklist. If something is fine, say it's fine and move on. If something is wrong, say why and what you'd do instead. No "consider doing X" weasel-wording when you mean "do X".

## Stack the reviewer must know

Before reviewing, identify the project's stack and conventions from `CLAUDE.md` (or equivalent project docs), the package manifests, and the source layout. Note framework versions, language strictness settings, locale/spelling rules, and any project-specific design-token or styling conventions.

## How to gather the diff

1. If the user pasted a diff, review that.

2. Otherwise run `git status` and `git diff` / `git diff --staged` / `git diff main...HEAD` as appropriate for what the user asked about ("uncommitted" → unstaged + staged; "this branch" → `main...HEAD`; "my PR" → `main...HEAD`).

3. Read the actual files at their current state for full context; diffs miss surrounding code.

4. If the change touches a project-specific design-token or styling system, re-read the relevant token/global stylesheet to verify usage is correct.

## Severity model

Use four levels in this exact order:

- Blocker: must fix before merge (broken behaviour, security, data loss, sensitive-data exposure, build break, hard violation of a project-wide convention)

- Major: should fix before merge (architectural smell, perf regression, accessibility fail, missing error handling on a user path)

- Minor: fix when convenient (naming, small duplication, awkward types)

- Nit: take it or leave it (style preference, micro-optimisation)

Group findings by severity. If there are no blockers, say so up front so the author knows it's safe to merge after addressing the rest. Skip a severity heading entirely if its section is empty.

## What to actively look for

Architecture and boundaries: where state lives, module/layer boundaries, premature abstraction, leaky abstractions, server vs client split where the framework distinguishes them, route/handler vs action choice.

Framework specifics: idiomatic use of the framework's primitives at the version in play (hooks, components, lifecycle, suspense/streaming, hydration, server-only vs client-only modules).

Caching and rendering: caching defaults, force-dynamic overuse, metadata, asset optimisation, route configuration, parallel/intercepting routing where applicable.

Styling: arbitrary values where a token exists, design-token discipline (semantic vs primitive layers), opacity-tint pitfalls, utility misuse, CSS-config drift. Flag hardcoded hex/colours when a token would do.

Typography: any drift from the project's defined font stack.

Domain-sensitive data: handling of any sensitive or regulated data (PII, PHI, financial, secrets), logging, analytics, error reports, URLs, third-party transports. Flag anything that could leak it.

Accessibility: keyboard nav, focus states, aria, label associations, colour contrast, custom popovers/menus, multi-step or live-region announcements.

Performance: bundle bloat from over-importing UI libraries, large client components that could be server, unnecessary client-only directives, unmemoised expensive renders, image and font loading.

TypeScript: `any`, `as` casts, non-null assertions, missing discriminated unions where state is modelled as separate booleans, untyped boundary data (form payloads, fetch responses) extracted without runtime validation.

Spelling/locale: any drift from the project's chosen locale (en_US vs en_GB/en_AU, etc.).

Workflow rules from `CLAUDE.md` (or equivalent): code that violates project-wide conventions (e.g. directory-traversal anti-patterns, secret-file access, sleep/poll patterns, banned tooling shortcuts).

## How to deliver the review

Start with a one-line verdict ("ship it after the two minors", "blocker on the {area}, hold", etc). Then a 2-3 sentence summary of what changed and the reviewer's read on it. Then findings grouped by severity, each as: `file:line`, finding, why it matters, what to do. Quote the offending line if useful. Skip the severity heading if a section is empty. End with "Out of scope but worth noting" only if there's something genuinely worth flagging that wasn't part of the diff.

## Output location and filename

Every review is written to a file in `.plans/code-review/` at the repo root. Never dump the review into chat only; always write the file and then surface the verdict + key findings inline.

Filename format: `CR-XXX - scope - change-short-name.md`

- `CR-XXX` is a zero-padded sequential number (CR-001, CR-002, ...). Before writing, list `.plans/code-review/` and use the next number after the highest existing one. If the folder doesn't exist yet, create it and start at CR-001.

- `scope` is the area being reviewed (an app, package, or service name). If the change spans multiple scopes, use a name that captures the umbrella (e.g. `monorepo`). If it's not scope-specific (root config, CI, tooling), use `root`.

- `change-short-name` is a kebab-case 2-5 word summary of what changed (e.g. `auth-flow-refactor`, `submit-route-fix`, `picker-a11y`).

- Spaces around the dashes in the filename are intentional; match the format exactly.

Examples:

- `.plans/code-review/CR-001 - {scope} - {change-slug}.md`

- `.plans/code-review/CR-002 - {scope} - {change-slug}.md`

- `.plans/code-review/CR-003 - root - {change-slug}.md`

## File contents

The markdown file contains the full review (verdict, summary, findings by severity, out-of-scope notes). At the top of the file, include a small frontmatter-style header:

```

CR-XXX
Scope: <scope>
Change: <change short name>
Date: <YYYY-MM-DD>
Commit/branch: <short SHA or branch name if determinable from git, else "uncommitted">
Verdict: <one-line verdict>

```

Then the full review body below.

## What the reviewer must NOT do

- Don't refactor unprompted.

- Don't suggest renaming things just because.

- Don't pad the review with praise sandwiches.

- Don't list every minor inconsistency; pick the ones that matter.

- Don't recommend tests unless the change actually warrants them (a token swap doesn't need tests; a new API route does).

- Don't suggest extracting shared packages or restructuring the project layout unless the user asked for it.

## Output format

Plain markdown, no bolded headings, no excessive bullet nesting. Code references as backticks. File paths relative to repo root.

I run this on myself before I merge. The model wrote the code. I want a second pass with fresh eyes that aren't the same eyes that wrote it.

Do a CR on the uncommitted changes on this branch.

The skill reads CLAUDE.md to remember project conventions, runs git diff, reads the surrounding code (diffs miss context), and writes a file at .plans/code-review/CR-001 - campervan - location-search.md. One-line verdict at the top. Findings grouped by severity: blockers, majors, minors, nits.
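The numbering rule is the bit most likely to go wrong by hand, so here's a rough sketch of it. It assumes you already have the directory listing as an array of filenames (the skill lists `.plans/code-review/` itself); `nextReviewFilename` is a name I've made up, not something in the skill.

```typescript
// Builds the next review filename from an existing directory listing.
// Takes plain filenames so the sketch stays free of filesystem calls.
function nextReviewFilename(existing: string[], scope: string, slug: string): string {
  const numbers = existing
    .map((name) => /^CR-(\d+) - /.exec(name))
    .filter((m): m is RegExpExecArray => m !== null)
    .map((m) => Number(m[1]));

  // Empty or missing folder: start at CR-001. Otherwise go one past the highest number.
  const next = numbers.length === 0 ? 1 : Math.max(...numbers) + 1;
  const id = String(next).padStart(3, "0");

  // Spaces around the dashes are part of the format.
  return `CR-${id} - ${scope} - ${slug}.md`;
}

console.log(nextReviewFilename([], "campervan", "location-search"));
```

Using the highest existing number rather than the file count means a deleted review doesn't cause a number to be reused.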

The voice is what makes it useful. Principal engineer reviewing a colleague's PR. Direct. No "consider" weasel words. If something's fine, it says so and moves on. If something's wrong, it says why and what to do instead. Last week it caught a hardcoded hex where a token would've done, a route that should've been server-only but had 'use client' at the top, and a Promise<any> in a service file because I'd been lazy. None would've stopped the build. All would have annoyed me three weeks later when I'd forgotten the context.
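To make the severity grouping concrete, here's a small sketch that renders those three catches the way the skill describes: fixed order, a "no blockers" line up front, empty headings skipped. The file paths and the severities I've assigned are my own guesses for illustration; the real review picks them from the diff.

```typescript
type Severity = "Blocker" | "Major" | "Minor" | "Nit";

interface Finding {
  severity: Severity;
  location: string; // file:line (hypothetical paths below)
  note: string;
}

// The skill fixes this order.
const SEVERITY_ORDER: Severity[] = ["Blocker", "Major", "Minor", "Nit"];

function renderFindings(findings: Finding[]): string {
  const out: string[] = [];
  // Say so up front when nothing blocks the merge.
  if (!findings.some((f) => f.severity === "Blocker")) {
    out.push("No blockers.");
  }
  for (const severity of SEVERITY_ORDER) {
    const group = findings.filter((f) => f.severity === severity);
    if (group.length === 0) continue; // skip empty severity headings entirely
    out.push("", `## ${severity}`);
    for (const f of group) {
      out.push(`- \`${f.location}\`: ${f.note}`);
    }
  }
  return out.join("\n");
}

const review = renderFindings([
  { severity: "Minor", location: "components/FilterChip.tsx:18", note: "hardcoded hex where a token exists" },
  { severity: "Major", location: "app/search/page.tsx:1", note: "'use client' on a route that should be server-only" },
  { severity: "Minor", location: "services/campsites.ts:42", note: "Promise<any> on a service return type" },
]);
console.log(review);
```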

testing-pyramid

---

name: testing-pyramid

description: Plan or audit a project's test coverage against the testing pyramid (unit / integration / e2e). Use when the user asks "what should we test", "is our coverage right", "are we over-testing", "are we missing tests", "what layer should this go in", or wants a review of an existing test suite for bloat or gaps. Outputs a coverage map, layer recommendations, and a concrete edit list, not a fresh test suite.

metadata:

  argument-hint: <feature/area to test, FD ID, or "audit" to review the existing suite>

---

# Testing Pyramid

This skill plans or audits a project's test coverage using the testing pyramid as the rubric. It does NOT write tests; it produces a coverage map, identifies gaps and bloat, and outputs a prioritised edit list. Implementation is a separate task.

The pyramid the skill uses (informed by `apps/shakashuffle/`):

```

         /\
        /e2e\         few, cross-page flows, real DB, real cookies
       /------\
      / inte-  \      some, route-handler logic, RTL component renders
     / gration  \
    /------------\
   /     unit     \   many, pure functions, validators, reducers, hooks
  /----------------\

```

Heuristic: **if a test would pass with `mockResolvedValue`, it doesn't belong in Playwright.**

## When to use

Trigger this skill when the user asks any of:

- "What tests should I write for X?"

- "Is our test coverage right?" / "Are we missing tests?"

- "Is this over-tested?" / "Why is CI so slow?"

- "What layer should this assertion go in?"

- "Audit the test suite" / "Review tests in `apps/<app>`"

- "Plan tests for FD-XXX"

Do NOT trigger this skill when:

- The user wants you to actually write the test code (use a normal task; cite this skill's output if one exists).

- The user is debugging a single failing test (just fix it).

- The user wants a code review of a single PR, that's a different task.

## Modes

The skill has three modes. Pick one based on the trigger phrase or ask if ambiguous.

### Mode A: **Plan**. Design tests for a new feature / FD

Input: a feature description, an FD path (e.g. `.plans/feature-development/FD-002 - …`), or "the changes on this branch."

Output: a markdown file at `.plans/testing-pyramid/TP-XXX - <app> - <short topic>.md` (numbered sequentially, find the highest existing `TP-NNN` and increment) containing:

1. **Layer rubric** (the standard table, copy verbatim).

2. **Surface area**: list every behaviour the feature introduces, one row per behaviour, columns: `Behaviour`, `Layer`, `Test file (proposed)`, `Notes`.

3. **Open questions**: only the judgement calls (e.g. "do we mock translations or use the real provider?"). Use the in-file checkbox format defined below.

4. **Files to create / modify** with a Pass column (P1/P2/P3) for ordering.

5. **Out of scope** so the plan stays bounded.

### Mode B: **Audit**. Review an existing test suite

Input: a directory (e.g. `apps/shakashuffle/tests/`) or "this app".

Output: a markdown file at `.plans/testing-pyramid/TP-XXX - <app> - audit.md` (numbered sequentially, find the highest existing `TP-NNN` and increment) containing:

1. **Pyramid shape**: counts by layer (e.g. unit: 18, integration: 0, e2e: 12) and a sentence on whether the shape is healthy. An inverted or hourglass pyramid is a finding.

2. **Bloat**: tests that are at the wrong layer, duplicates across layers, or assertions that prove nothing the next-layer-up doesn't already prove. Cite specific files and line ranges.

3. **Gaps**: behaviours with no test coverage at any layer, or critical paths covered only by flaky e2e specs.

4. **Flakes**: tests that have been quarantined, marked `.skip`, or have a history of intermittent failures (grep `it.skip`, `test.skip`, `// flaky`, `xit`, `xdescribe`).

5. **Concrete edits** ordered as Pass 1 (must), Pass 2 (should), Pass 3 (nice). Each cites a file path and a one-sentence rationale.

### Mode C: **Layer pick**. Which layer for this one test?

Input: a description of one assertion ("does pasting an invite code with too few chars keep the button disabled?").

Output: an inline answer (≤120 words). Layer + the cheapest test that proves it + the file path it would live in. No markdown file written.

## Layer rubric (canonical)

| Layer | What it proves | When to reach for it | Speed | Example (shakashuffle) |
|-------|----------------|----------------------|-------|------------------------|
| **Unit** (Vitest, `tests/unit/`) | Pure functions; validators; reducers; component branching with mocked deps; small hook logic | Behaviour fits a single module, no real DOM tree, no network. | <100ms | `tests/unit/invite-code-validation.test.ts`, `tests/unit/poker-consensus.test.ts`, `tests/unit/rate-limit.test.ts` |
| **Integration** (Vitest, `tests/integration/`) | Route-handler logic with real validators + mocked Supabase admin; React-Testing-Library renders of a single page with mocked hooks; assertion of branching, error states, focus, accessibility | Behaviour spans 2–3 modules, no real browser or live network. | 100–500ms | `tests/integration/route-handlers/api-squads-create.test.ts`, `tests/integration/components/NoSquadEmptyState.test.tsx` |
| **E2E** (Playwright, `tests/e2e/`) | Cross-page flows touching DOM + cookies + redirects + DB; value is in the *between-pages* movement, not leaf logic | Genuinely end-to-end behaviour. The test would lose its point if you stubbed any single layer. | seconds | `tests/e2e/welcome-chooser.spec.ts`, `tests/e2e/poker-table-empty-state.spec.ts` |

## Questions the skill asks while planning

Cap one round at ~3 in-file questions. Skip questions whose answers are derivable from the codebase (config files, existing patterns). Ask only judgement calls.

### In-file question format (mandatory)

Every open question MUST use this exact shape, discrete checkbox options, a recommendation inline on the recommended option, plus an "Other" slot and a notes block. Free-prose questions without options are a failure mode; do not write them.

```markdown

#### QX. {short question title}

{One or two sentences explaining the question and why it matters.}

- [ ] Option A: {short description of what this option means}
- [ ] Option B: {short description} **(recommended: {one-line reason})**
- [ ] Option C: {short description}
- [ ] Other (notes):
  - _{your note here}_

**Notes / reasoning:**

- _{anything else worth recording about this pick}_

```

Rules:

- **Always offer concrete options.** Vague "how should we handle X?" is banned. If you can't think of two real options, the question isn't ready to ask.

- **Mark exactly one option `(recommended: …)`** with a one-line reason. Don't make the user derive your opinion.

- **Every question has an Other + Notes slot** so the user can override or annotate.

- **Questions are numbered** (Q1, Q1a, Q2, …) so chat can reference them.

- **Cap at ~3 per round.** Pick the questions that unblock the most other decisions.

### Common questions for testing plans

Use these as templates, adapt the options to the project, but keep the format above.

1. **Translation strategy.** Mock `useTranslations` to identity, or wrap RTL renders in `NextIntlClientProvider` with the real `messages/en.json`? (Default: real provider when missing-key bugs would be silent.)

2. **Vitest config split.** One config covering unit + integration, or a sibling `vitest.integration.config.ts`? (Default: split when CI parallelism matters or integration runs are slow.)

3. **Existing-test handling.** Leave existing tests, `git mv` + rewrite in place, or delete and rewrite from scratch? (Default: rewrite in place unless they're entirely about removed behaviour.)

4. **E2E scope.** Smoke (one happy path per surface) or full (every UX branch)? (Default: smoke.)

5. **Outbound HTTP stubbing.** MSW handlers, recorded fixtures, or a separate contract tool (pact / pactum)? (Default: MSW for consumer apps; pact only when you also own the provider.)

6. **DB strategy.** Module-mock the client, run a local DB container, or contract-stub the wire protocol? (Default: module-mock unless you have RLS/triggers worth exercising.)

## Bloat detection

Flag a test as bloat in the audit when:

- **Wrong layer.** A Playwright test that asserts a regex on rendered text from a single component; RTL would prove the same thing in 50ms. `tests/e2e/basic-functionality.spec.ts:42, "team members heading is visible"` is the canonical example.

- **Multi-layer duplicate.** The same assertion exists at unit + integration + e2e. Pick the cheapest layer that's still meaningful; delete the others.

- **Mock theatre.** A test that mocks every collaborator and asserts the mocks were called with the values you passed in. The behaviour under test is "the function calls its arguments"; delete it.

- **Setup-heavy / assertion-light.** Setup ≥ 5× the assertion lines. Either the test is testing setup, or the unit under test has too many seams. Flag for either deletion or a refactor question.

- **Implementation snapshot.** Snapshot tests on rendered HTML that drift on every legitimate copy change. The signal-to-noise is low; delete unless the snapshot covers a real invariant (token usage, ARIA structure).

- **Coverage-percentage tests.** Tests written purely to bump a coverage percentage with no behavioural claim. Delete.

## Sufficiency detection

Flag a behaviour as under-tested when ALL of these are true:

- It's user-visible OR it crosses a system boundary (HTTP, DB, auth).

- A bug here would be discovered by a user, not by the next test that runs.

- No layer currently asserts the behaviour. (Coverage tools count line execution, not assertions; read the actual tests.)

Critical-path checklist (use as a prompt, not a checklist gate):

- Authentication flow: signin/signup happy path, error path, OAuth callback.

- Authorisation: the cheapest "user A can't see user B's data" test.

- Money path: anything that creates, modifies, or charges a Stripe subscription.

- Data write path: the API route that creates the most-queried table row (squads, sessions, etc.).

- Empty state: every page that has one. Empty states regress silently.

- Redirect chain: any flow with two or more redirects (auth callback is the usual culprit).

## Output format

### Mode A / Mode B output

A single markdown file at `.plans/testing-pyramid/TP-XXX - <app> - <topic|audit>.md` with these sections in order:

```markdown

# Test plan / audit, <subject>

## Pyramid shape (audit only)

{counts + one-sentence diagnosis}

## Layer rubric

{copy the canonical table verbatim}

## Surface area / Findings

{table per the mode's spec}

## Open questions

{checkbox blocks using the in-file question format above, REMOVE blocks once resolved; this section only contains live, unresolved questions}

## Decisions

{table populated as questions resolve, see Decisions table format below}

## Files to create / modify

| File | Pass | Type (create/modify/done) | Notes |

## Out of scope

{bullets}

```

The file is the durable artefact. Chat updates between cycles are ≤120 words.

### Mode C output

Inline answer only. Format:

> **Layer:** <unit | integration | e2e>

> **Why:** <one sentence>

> **File:** `<path>` (existing or new)

> **Why not the next layer up:** <one sentence, what mocks would still apply>

## Iteration loop (Mode A and Mode B)

Once the plan/audit file exists, this skill runs an in-file Q&A loop until the user signs off. No test code is written while the loop is running; the file is the artefact, chat is for terse status only.

### Beat 1: Read current state

Read the full plan file end to end. Identify:

- Ticked checkboxes and any inline notes the user added under questions

- Any new prose the user inserted into Surface area / Findings / Files-to-modify (a direct edit is a decision too)

- Sections that have drifted from current reality (e.g. file paths that have moved, layer choices the codebase no longer supports)

- The current `## Open questions` and `## Decisions` sections, what's answered, what isn't

### Beat 2: Process user answers (fold FIRST, then move)

For each question the user has resolved:

1. **Fold the answer into every section of the plan it affects**: Surface area rows, Files to create / modify, layer notes, prose. The plan must read consistently as if the decision was always there. Orphaned answers sitting in `## Open questions` while the body still hedges is the most common failure mode of this skill.

2. **Append the decision to the `## Decisions` table** (format below).

3. **Remove the resolved question block from `## Open questions`**; do not leave ticked checkboxes hanging around. The section should only ever contain *unresolved* questions.

The order matters: fold first, then record, then prune. Skipping the fold-in step leaves the plan contradictory.

Watch for answers that contradict things written in earlier cycles. Reconcile explicitly, never silently keep the old wording.

### Beat 3: Keep progress live

Update `## Pyramid shape (current)` after each implementation pass, actual file counts per layer plus a one-line note on what's done and what remains.

For the `## Files to create / modify` table, mark rows as you complete them, flip the `Type` column from `create` / `modify` to `done`, or add a `✓` prefix. Don't delete rows; the table is a checklist the user reads to see what's left.

### Beat 4: Raise new questions

Answers almost always raise new questions. Surface them **in the plan file** using the in-file question format above (NOT only in chat). Cap one round at ~3 questions, pick the ones that unblock the most other decisions. If you have more than three, hold the rest for the next cycle.

### Beat 5: Report back and wait

Send the user a terse message (≤120 words) covering:

- What was folded in based on their answers

- What new questions were raised (by ID) and where in the file to find them

- One unilateral decision made if any, flag it explicitly so the user can override

Then stop. The user will either answer the new questions (another cycle begins) or sign off.

## Decisions table format

`## Decisions` is a **table**, not a list, with columns `✅`, `Question`, `Decision`, `Why`. Keep each `Why` cell to one sentence so the table scans fast. Order rows by when each question was raised (not alphabetically); the order itself carries information about how the design evolved.

Example:

```markdown

## Decisions

| ✅ | Question | Decision | Why |
|----|----------|----------|-----|
| ✅ | Q1, Translation strategy | Wrap RTL renders in `NextIntlClientProvider` with real `messages/en.json` | Missing-key bugs would otherwise be silent |
| ✅ | Q2, Vitest config split | Single `vitest.config.ts` covering unit + integration | CI parallelism not yet a bottleneck |

```

## Sign-off gate (Mode A and Mode B)

Don't declare the plan/audit done until ALL of the following are true:

1. Every question in the file is answered: no unticked checkboxes in `## Open questions`, no `{your note here}` placeholders the user was expected to fill.

2. The plan sections (Surface area / Findings, Files to create / modify, Pyramid shape, etc.) are self-consistent: you could hand the document to someone cold and they could execute it without re-asking the user.

3. The user has explicitly confirmed they want to proceed. Common sign-off phrases: "looks good, start writing", "go", "implement", "ship it", "approved". If the user's latest message doesn't clearly sign off, ask: "Is this ready to implement, or do you want another pass?", one sentence, then stop.

Until all three are true, stay in the loop. Do not start writing test files. Do not even read the implementation source files unless they're needed to answer a planning question.

## Hard rules

- **No code edits.** Test files are written in a separate task; this skill produces the plan only.

- **Cite real file paths.** When recommending a layer or pointing at bloat, name the file. Don't say "some e2e test", say `tests/e2e/basic-functionality.spec.ts:42`.

- **Don't prescribe coverage percentages.** They're the wrong metric. Talk in behaviours and risk.

- **Don't recommend snapshot tests.** Unless the snapshot is over a structural invariant (ARIA tree, token list) the user can defend.

- **One layer per behaviour.** If the surface-area or findings table lists the same behaviour at two layers, justify it in the Notes column or pick one.

- **Respect project-wide preferences in memory.** If memory says "no fallbacks / no deprecation comments," the audit must flag deprecation-comment cruft in tests as bloat.

- **Always fold answers into the main plan.** Orphaned answers in `## Open questions` while the surface-area / findings table is stale is the most common failure mode of this skill; guard against it.

- **Never hide questions from the user by asking them only in chat.** If it's a decision that shapes the plan, it goes in the file with options + a notes slot. Chat is for terse status updates between cycles.

- **Watch for project-wide feedback while iterating.** If the user shares something that clearly applies beyond this plan ("I prefer X over Y everywhere"), save it to auto-memory in the same cycle it's folded into the plan; don't wait for a separate invitation.

- **Keep update messages terse.** The plan file is the durable artefact. Chat messages between cycles should be ≤120 words and never repeat content that's already in the file.

- **Ask before guessing.** If an answer is ambiguous, raise it as a new question in the next cycle rather than picking unilaterally. If the decision is tiny and blocks progress, flag the unilateral pick explicitly in the chat summary so the user can override.

## Reference: shakashuffle test layout

For new monorepo apps, mirror this shape:

```

apps/<app>/
  tests/
    unit/                          # pure logic, validators, hooks (mocked deps)
      *.test.ts
    integration/                   # route handlers + RTL component renders
      route-handlers/
        api-*.test.ts
      components/
        *.test.tsx
    e2e/                           # Playwright, cross-page only
      *.spec.ts
      global-setup.ts
      helpers/
  vitest.config.ts                 # unit
  vitest.integration.config.ts     # integration (separate so CI can split)
  playwright.config.ts
  test-setup.ts
  test-setup-components.ts         # exports renderWithIntl()

```

If an app deviates, that deviation is a finding in the audit unless there's a documented reason in the app's `CLAUDE.md`.

Three modes, though I mostly use two: "what should I test for this feature" before I write the tests, and "audit the existing suite" when CI starts feeling sluggish.

Plan tests for FD-001.

Output goes to .plans/testing-pyramid/TP-001 - campervan - location-search.md. It reads the FD, lists every behaviour the feature introduces in a table, and assigns each one to a layer: unit, integration, or e2e. The heuristic is brutally simple: if a test would pass with mockResolvedValue, it doesn't belong in Playwright.
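If you squint, the layer assignment is a two-question decision. Here's a minimal sketch of it under my own simplification: `passesWithMocks` stands in for the mockResolvedValue heuristic, `modulesTouched` for the rubric's "fits a single module" vs "spans 2–3 modules" split. The `Behaviour` shape, `pickLayer` and the sample behaviours are hypothetical, and the skill makes this call with judgement rather than a function.

```typescript
type Layer = "unit" | "integration" | "e2e";

interface Behaviour {
  name: string;
  passesWithMocks: boolean; // would the assertion still hold with every backend call stubbed?
  modulesTouched: number;
}

function pickLayer(b: Behaviour): Layer {
  // Only behaviour that loses its point when stubbed earns a Playwright spec.
  if (!b.passesWithMocks) return "e2e";
  // Otherwise pick the cheapest layer that still covers the modules involved.
  return b.modulesTouched > 1 ? "integration" : "unit";
}

// Illustrative behaviours for a location-search feature (not taken from a real FD).
const surfaceArea: Behaviour[] = [
  { name: "30-minute radius calculation", passesWithMocks: true, modulesTouched: 1 },
  { name: "server action filters by amenities", passesWithMocks: true, modulesTouched: 3 },
  { name: "search, open a result, session stays intact", passesWithMocks: false, modulesTouched: 5 },
];

for (const b of surfaceArea) {
  console.log(`${pickLayer(b)}: ${b.name}`);
}
```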

Audit mode has saved my CI bill more than once. Looking at a suite and going "eighteen unit tests, zero integration tests, twelve e2e tests" is the kind of feedback I'd never give myself, because I'm the one who wrote the suite.
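The shape diagnosis itself is simple enough to sketch. The skill only says an inverted or hourglass pyramid is a finding; the exact thresholds below are my assumption, as are the `pyramidShape` name and the "irregular" catch-all.

```typescript
interface LayerCounts {
  unit: number;
  integration: number;
  e2e: number;
}

type Shape = "healthy" | "inverted" | "hourglass" | "irregular";

// Assumed definitions: healthy narrows on the way up, inverted has more e2e than unit,
// hourglass has a middle layer thinner than both neighbours.
function pyramidShape({ unit, integration, e2e }: LayerCounts): Shape {
  if (unit >= integration && integration >= e2e) return "healthy";
  if (e2e > unit) return "inverted";
  if (integration < unit && integration < e2e) return "hourglass";
  return "irregular";
}

// The suite from the paragraph above: 18 unit, 0 integration, 12 e2e.
const shape = pyramidShape({ unit: 18, integration: 0, e2e: 12 });
console.log(shape);
```

Under these definitions that suite comes out as an hourglass: plenty at the bottom, plenty at the top, nothing in the middle where route handlers and component renders should be.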

Wrapping up

Four skills. One pattern. Markdown files in .plans/, in-file checkbox questions, fold-in loops, agents that stop and wait instead of running off and writing 400 lines I didn't ask for.
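Two of the three sign-off conditions are mechanical, so they can be checked straight off the markdown. A rough sketch, assuming the plan uses a `## Open questions` heading and the `{your note here}` placeholder from the question format; `checkSignOffGate` is a made-up name, and the "is the spec self-consistent" condition still needs a human or the agent's judgement.

```typescript
interface GateResult {
  unticked: number; // "- [ ]" lines still sitting under "## Open questions"
  placeholders: number; // "{your note here}" left anywhere in the file
  ready: boolean;
}

function checkSignOffGate(plan: string, userSignedOff: boolean): GateResult {
  let inOpenQuestions = false;
  let unticked = 0;

  for (const line of plan.split("\n")) {
    // A level-2 heading starts or ends the Open questions section.
    if (line.startsWith("## ")) {
      inOpenQuestions = line.trim().toLowerCase() === "## open questions";
      continue;
    }
    if (inOpenQuestions && /^\s*- \[ \]/.test(line)) unticked += 1;
  }

  const placeholders = plan.split("{your note here}").length - 1;
  const ready = unticked === 0 && placeholders === 0 && userSignedOff;
  return { unticked, placeholders, ready };
}

const draft = [
  "## Open questions",
  "#### Q1. Where is state persisted?",
  "- [ ] Option A: URL params",
  "- [ ] Other (notes):",
  "  - _{your note here}_",
  "## Decisions",
].join("\n");

console.log(checkSignOffGate(draft, true));
```

Even with the user saying "ship it", the draft above stays in the loop, because the file still has live questions in it.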

Is this overkill for a weekend project? Probably. Do it anyway. The discipline of writing the context down, even briefly, is what makes the agent useful instead of dangerous.

The room, not the words in it.